Killer Robots: Should or Even Can They Be Banned?

- Dr. Timothy Smith

- Sep 26, 2018


Updated: Jun 13, 2021

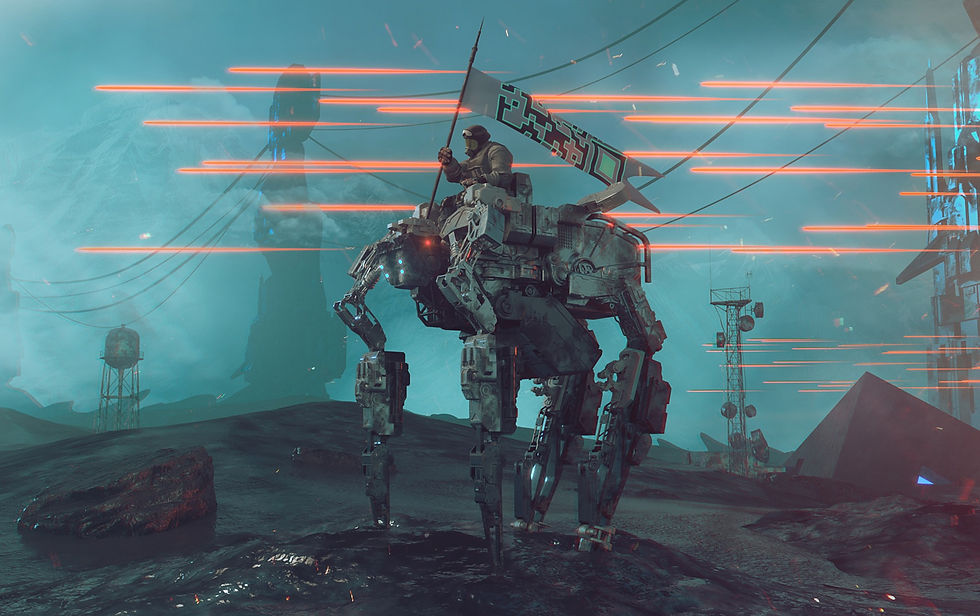

Photo Source: Pixabay

On September 12, 2018, Members of the European Parliament voted in favor of a resolution calling for an international ban on lethal autonomous weapons systems (LAWS), more popularly known as “killer robots.” They defined lethal autonomous weapons as any machine that can take action to kill without a human approving the decision. According to the BBC, the resolution comes just before negotiations on LAWS scheduled to take place at the United Nations in November of this year.

Advances in robotics and artificial intelligence have already transformed many aspects of society, commerce, and manufacturing. For example, the electric car company Tesla Inc. offers a feature in its cars that autonomously navigates the vehicle from one place to another without help from the driver. Of course, the driver, according to the company, should remain vigilant and ready to retake control if the autopilot makes a mistake that endangers the car or others on the road. The combination of advanced sensors, artificial intelligence, and mechanics makes Tesla cars essentially autonomous robots. At an even larger and more independent scale, the US Navy, in collaboration with the Defense Advanced Research Projects Agency (DARPA), developed and launched in 2016 a 132-foot-long, fully autonomous submarine-tracking ship named Sea Hunter. According to Julian Turner in an article for Naval Technology, the autonomous craft can travel the ocean for up to ninety days, hunting enemy submarines and avoiding other ships and obstacles without any intervention from human handlers. The vessel does not carry any weapons at this point.

Advances in artificial intelligence and robotics take armed robots out of the realm of science fiction and make them a reality today. Some argue that missile defense systems that automatically respond to incoming missiles, such as Arrow 3, developed by the United States and Israel, already constitute autonomous weapons systems. Moreover, although the heavy use of drones in the war against terrorism in the Middle East still involves human operators, the capability to make the drones independent appears imminent. The vision of a new, escalating arms race to build killer robots that can be sent into battle and fight without human intervention poses a genuine ethical dilemma. If some countries choose to ban the development and use of killer robots but others do not, the people of one country will have to fight and die in battle against invading robots while the aggressor’s sons and daughters stay out of harm's way, letting their robots wage battle.

History does show that the world can unite in banning certain weapons. After the devastation of World War I, the international community joined together in 1925 under the Geneva Protocol, which banned the use, but not the possession, of chemical weapons. The agreement gained further strength in 1993 with the signing of the Chemical Weapons Convention, which bans the development, production, stockpiling, and transfer of chemical weapons. Apart from recent events in Syria, nearly every country in the world has come into compliance with the treaty (armscontrol.org). But the agreement does not ban all lethal gases because many have industrial uses. For example, chlorine gas, once used on the battlefield, has wide-ranging industrial applications such as the production of plastics, paper, medicines, and paints.

Recently, members of the European Parliament voted to promote an international ban on lethal autonomous weapons. The vote comes just before the United Nations takes up the topic in November. Because of significant advances in artificial intelligence and robotics, the specter of killer robots looms large, and it does not take much to make the jump from autonomous vehicles such as cars and ships to weaponized vehicles and robots. The international community has shown that it can band together to prohibit certain weapons such as lethal chemicals and biological agents. However, such bans only work with near-universal compliance. To date, though, Russia, South Korea, the United States, and Israel have indicated that they will not participate in a ban on killer robots. Until all countries come to the table and agree to ban lethal autonomous weapons, nation states will need to prepare for a new era in warfare.

Dr. Smith’s career in scientific and information research spans the areas of bioinformatics, artificial intelligence, toxicology, and chemistry. He has published a number of peer-reviewed scientific papers. He has worked over the past seventeen years developing advanced analytics, machine learning, and knowledge management tools to enable research and support high-level decision-making. Tim earned a Ph.D. in Toxicology from Cornell University and a Bachelor of Science in chemistry from the University of Washington.

His book is available on Amazon in paperback and Kindle formats.
